Interactive models

Explore AI competition dynamics directly

Most AI governance research is published as static text. We turn AI competition models into interactive tools, so researchers and policymakers can see for themselves when safety incentives hold up, when they break down, and how modeling assumptions affect the results. We think this is a more concrete and memorable way to understand the dynamics and risks of AI competition.

Strategic safety analysis

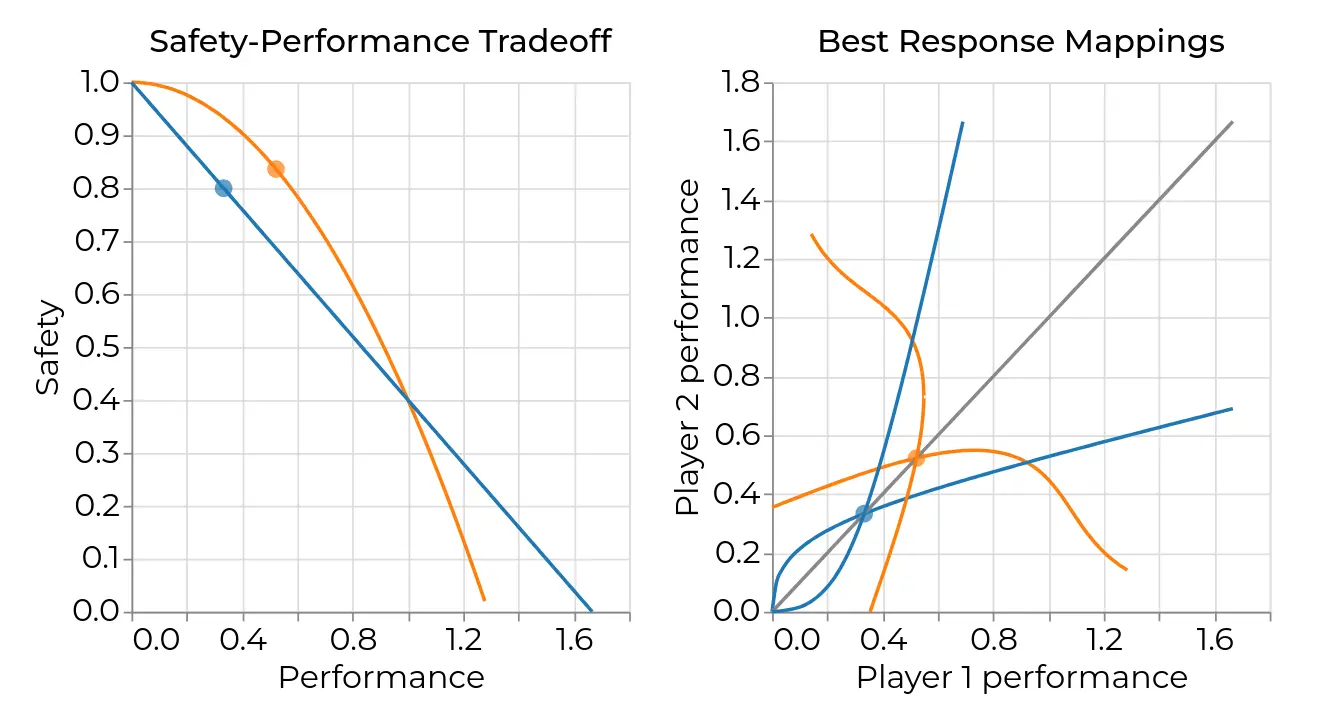

Safety-Performance Tradeoff web app

Built in collaboration with Professor Robert Trager, co-director of the Oxford Martin AI Governance Initiative, the Safety-Performance Tradeoff (SPT) web app helps users explore when better safety insights reduce risk and when competition can still push AI developers toward dangerous outcomes.

It implements a game-theoretic model of how safety knowledge affects the choices of competing AI developers. Users can adjust parameters, start from built-in presets, and compare the results visually.

Dynamic AI competition model

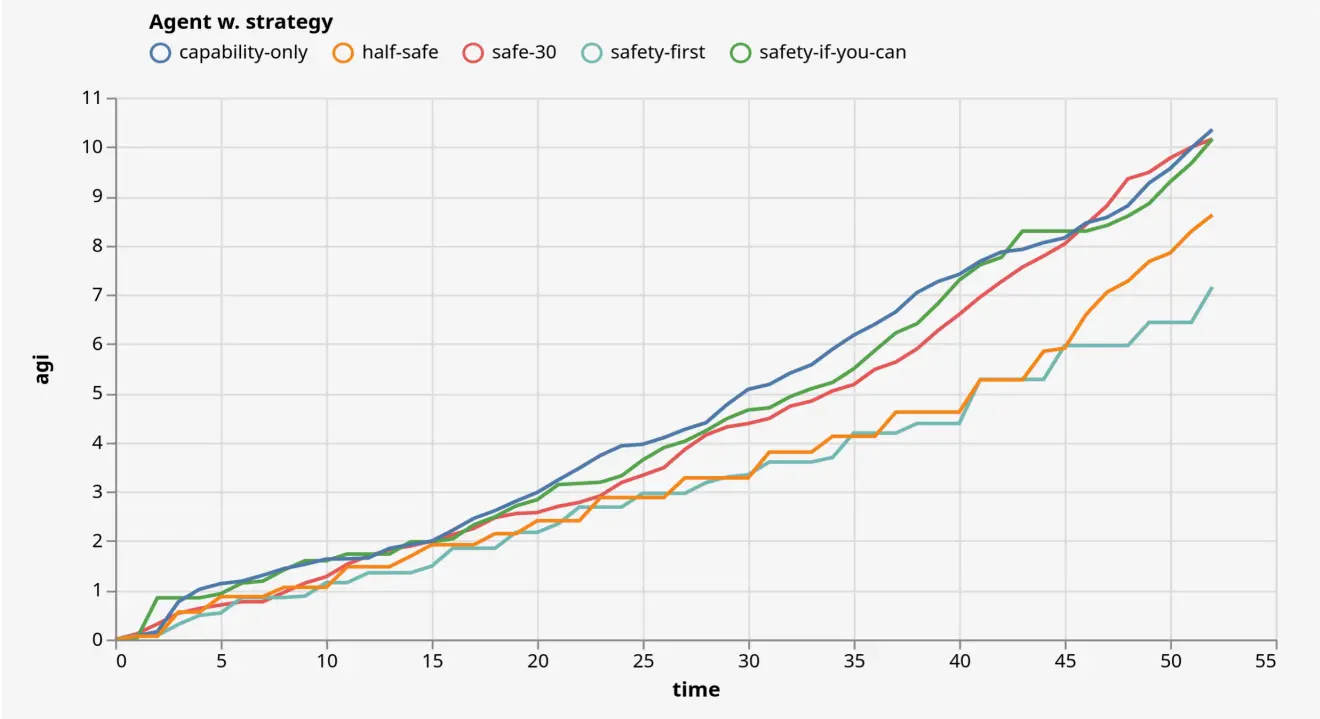

Baseline web app

The Baseline web app lets users explore how AI competition changes when decisions unfold over time rather than in a single move.

It extends the static Racing to the Precipice framework into a dynamic model, drawing on game theory, economics, and AI safety research. Users can see how assumptions about AI competition, capability growth, and safety investment interact, and where the results differ from the simpler static case.

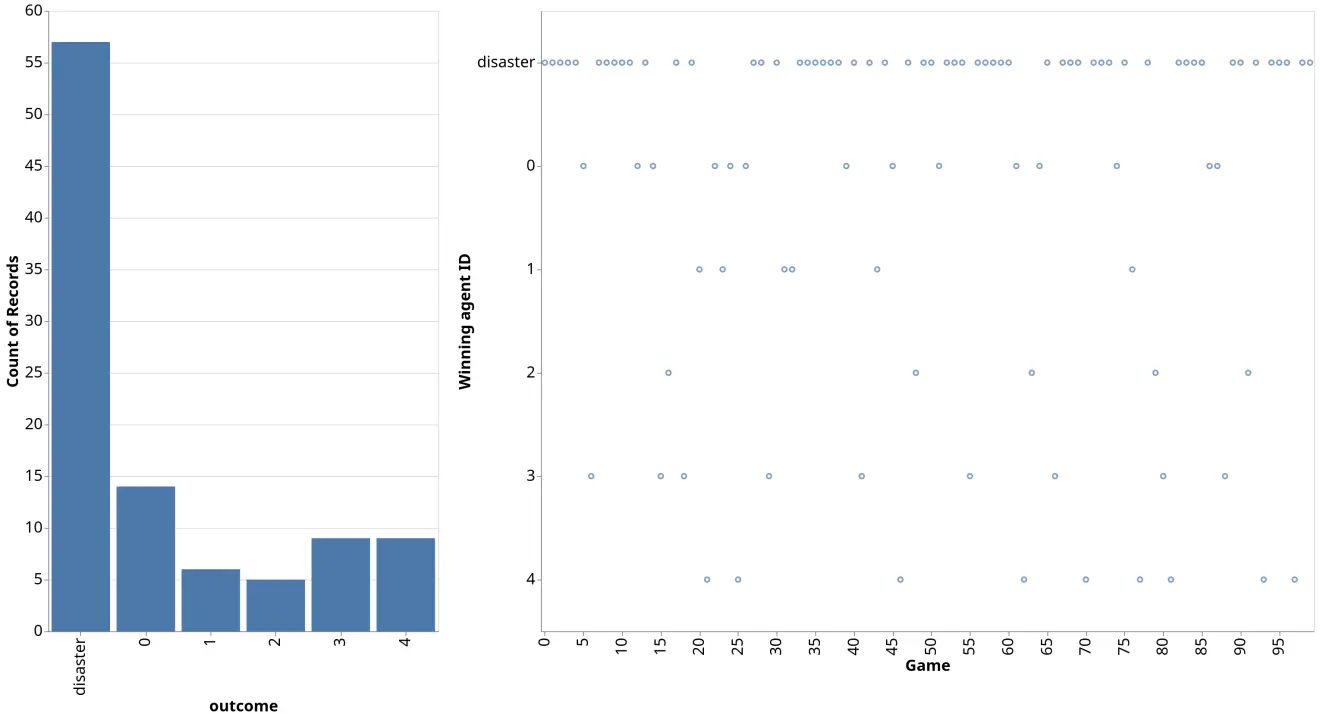

Static AI competition model

Racing to the Precipice web app

The Racing to the Precipice web app provides a static model of AI competition. It lets users run the model with custom parameters and compare different strategic assumptions.

Based on the 2016 Racing to the Precipice (RTTP) paper by Armstrong, Bostrom, and Shulman, it serves as a reference point for the more complex tools above.

Build on this work

How we approach model implementation

Our technical overview explains how we build these tools, with a focus on reliability, composability, and long-term maintainability in computational science.