AI competition research

Research on AI competition and governance

We apply game theory and agent-based simulation to questions that are crucial for AI governance: what conditions lead developers to invest in safety, and what governance interventions are likely to withstand competitive pressure?

Our work combines game-theoretic analysis with computational modeling so that claims about AI competition dynamics can be tested quantitatively rather than argued only through analogy or intuition.

Competition model analysis

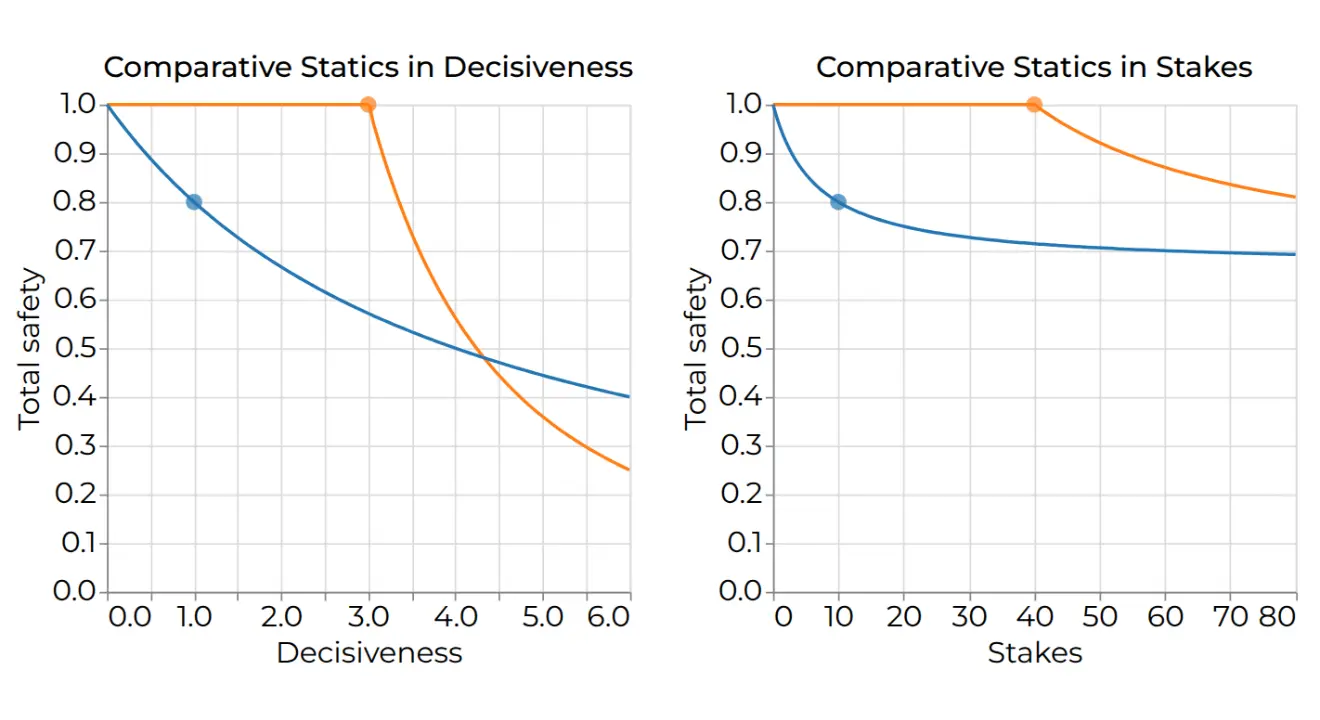

Analysis of the SPT model

We contributed to the Safety-Performance Tradeoff (SPT) model by producing analytical and computational solutions for multiple variants of the model. This work led to several of the insights we published on the SPT web app, including the finding that when a race laggard can also cause a disaster, developers have a stronger incentive to race ahead, which increases disaster risk.

Our work on the SPT model also influenced the research in "Uncertainty, Information, and Risk in International Technology Races" by Emery-Xu, Park, and Trager.

Policy analysis

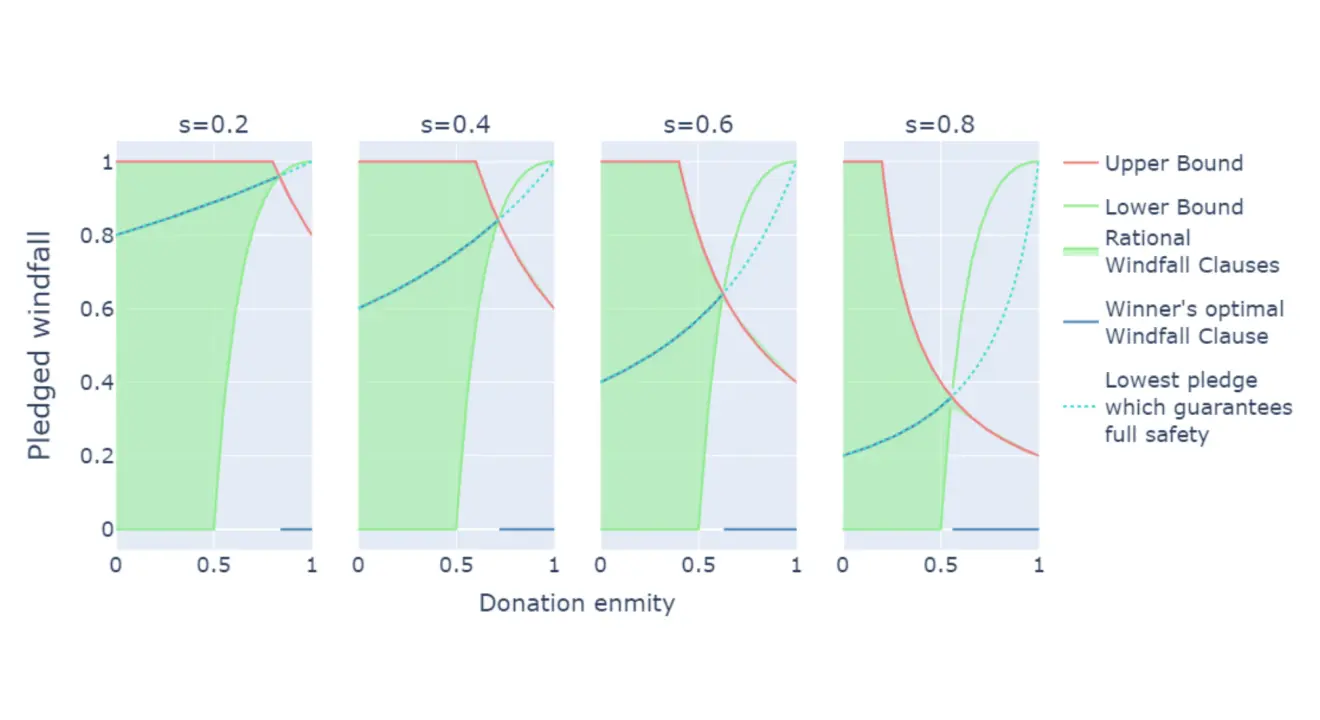

Safe Transformative AI via a Windfall Clause

This technical report extends a model of AI competition with a Windfall Clause to test whether the mechanism can remain viable under competitive pressure.

The analysis focuses on two challenges any such agreement would need to overcome: firms must have reason to join, and their commitments must be credible. The results suggest that firms can benefit from joining under a wide range of scenarios, providing evidence that a well-designed Windfall Clause could reduce risks from AI competition.

Competition model analysis

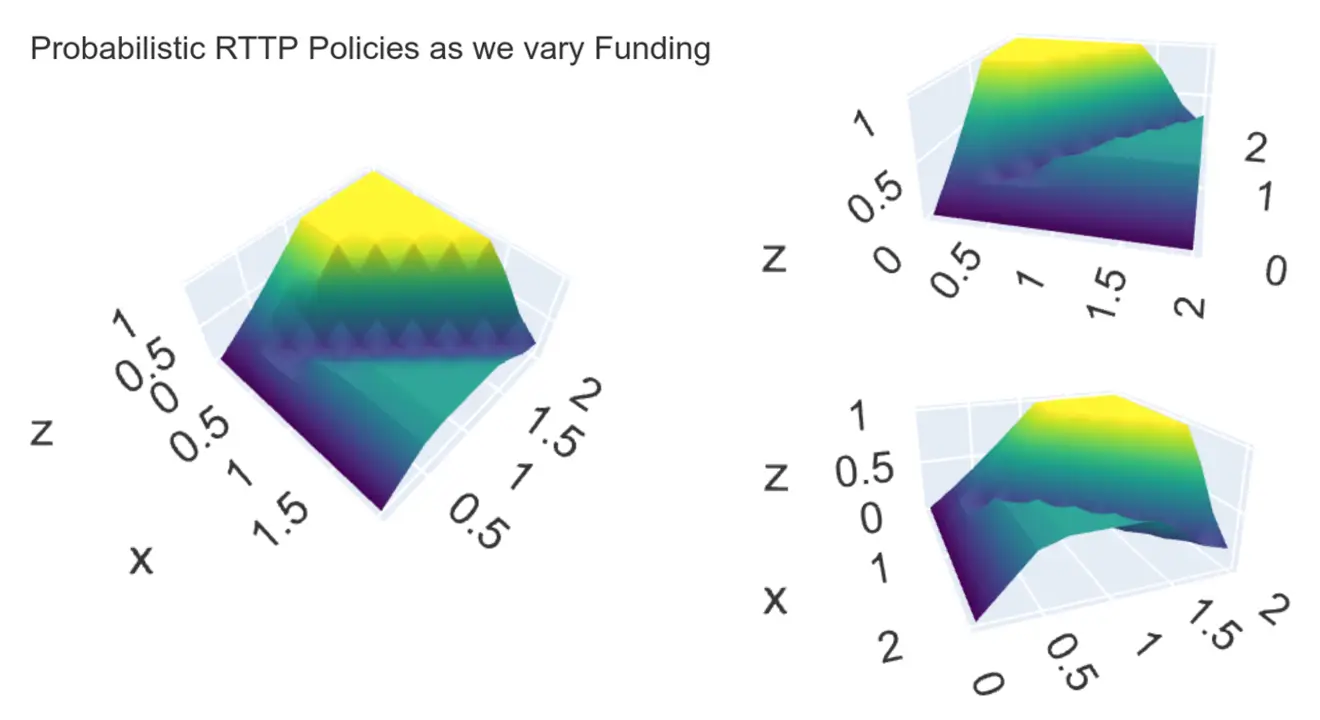

Analysis of the extended RTTP model

In the paper "Racing to the Precipice" (RTTP), Armstrong, Bostrom, and Shulman presented a static AI competition game. We extended that framework with a probabilistic version of the model and identified its Nash equilibrium strategies computationally.

This analysis helped clarify how uncertainty and differing marginal returns from safety investments jointly affect disaster risk. This work is available upon request.
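To give a sense of what identifying equilibrium strategies computationally can look like, here is a minimal sketch of a toy two-team race. It is not the extended RTTP model described above and not the original paper's model: the payoff rule, the fixed capabilities `c1` and `c2`, the `enmity` parameter, and the coarse safety grid are all simplifying assumptions chosen for illustration. Each team picks a safety level, the team with the higher score (capability minus safety) wins, and the winner avoids disaster with probability equal to its own safety level. The code enumerates every pair of safety levels and keeps those where neither team can gain by deviating.

```python
from itertools import product

# Hypothetical safety grid; quarter steps keep float arithmetic exact.
GRID = [0.0, 0.25, 0.5, 0.75, 1.0]


def payoffs(s1, s2, c1, c2, enmity):
    """Expected payoffs (u1, u2) in a toy two-team race (illustrative only).

    Assumptions: score = capability - safety; the higher score wins and ties
    are split evenly; the winner avoids disaster with probability equal to
    its safety level; a team gets 1 for winning safely, (1 - enmity) if the
    rival wins safely, and 0 if a disaster occurs.
    """
    score1, score2 = c1 - s1, c2 - s2
    if score1 > score2:
        p1_wins = 1.0
    elif score1 < score2:
        p1_wins = 0.0
    else:
        p1_wins = 0.5
    u1 = p1_wins * s1 + (1 - p1_wins) * s2 * (1 - enmity)
    u2 = (1 - p1_wins) * s2 + p1_wins * s1 * (1 - enmity)
    return u1, u2


def pure_nash_equilibria(grid, c1, c2, enmity, tol=1e-12):
    """Return all (s1, s2) on the grid where each choice is a best response."""
    equilibria = []
    for s1, s2 in product(grid, repeat=2):
        u1, u2 = payoffs(s1, s2, c1, c2, enmity)
        # Best payoff each team could reach by changing only its own choice.
        best1 = max(payoffs(d, s2, c1, c2, enmity)[0] for d in grid)
        best2 = max(payoffs(s1, d, c1, c2, enmity)[1] for d in grid)
        if u1 >= best1 - tol and u2 >= best2 - tol:
            equilibria.append((s1, s2))
    return equilibria
```

Calling `pure_nash_equilibria(GRID, 1.0, 1.0, enmity)` with different `enmity` values shows how the set of stable safety choices shifts as teams care more about who wins. The enumeration only finds pure-strategy equilibria on the grid, so it may return several profiles or none; a fuller treatment would also need mixed strategies and uncertainty about capabilities.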

AI competition research and tools

Related software work

Our research also feeds into software for exploring related AI competition models. One example is the Safety-Performance Tradeoff project, where we contributed to the underlying game-theoretic model and implemented the interactive web app with Professor Robert Trager.